
Twitter Bot Comment: Drive Leads, Avoid Spam

Most advice about a twitter bot comment is too simple to be useful. It says all bots are spam, all automation is risky, and the only safe move is to avoid it completely.

That advice misses how people use X for business. Founders, SDRs, consultants, and growth teams don't need random replies sprayed across the platform. They need a way to show up in the right conversations, at the right time, without turning their brand into thread pollution. The divide isn't bot versus human. It's brute-force automation versus controlled, contextual assistance.

That difference matters because automation already shapes visibility on the platform. A Pew Research Center analysis of tweeted links found that suspected bots shared 66% of all tweeted links to news and current events sites. If automation influences what gets seen at that scale, B2B teams need a better framework than "never automate anything."

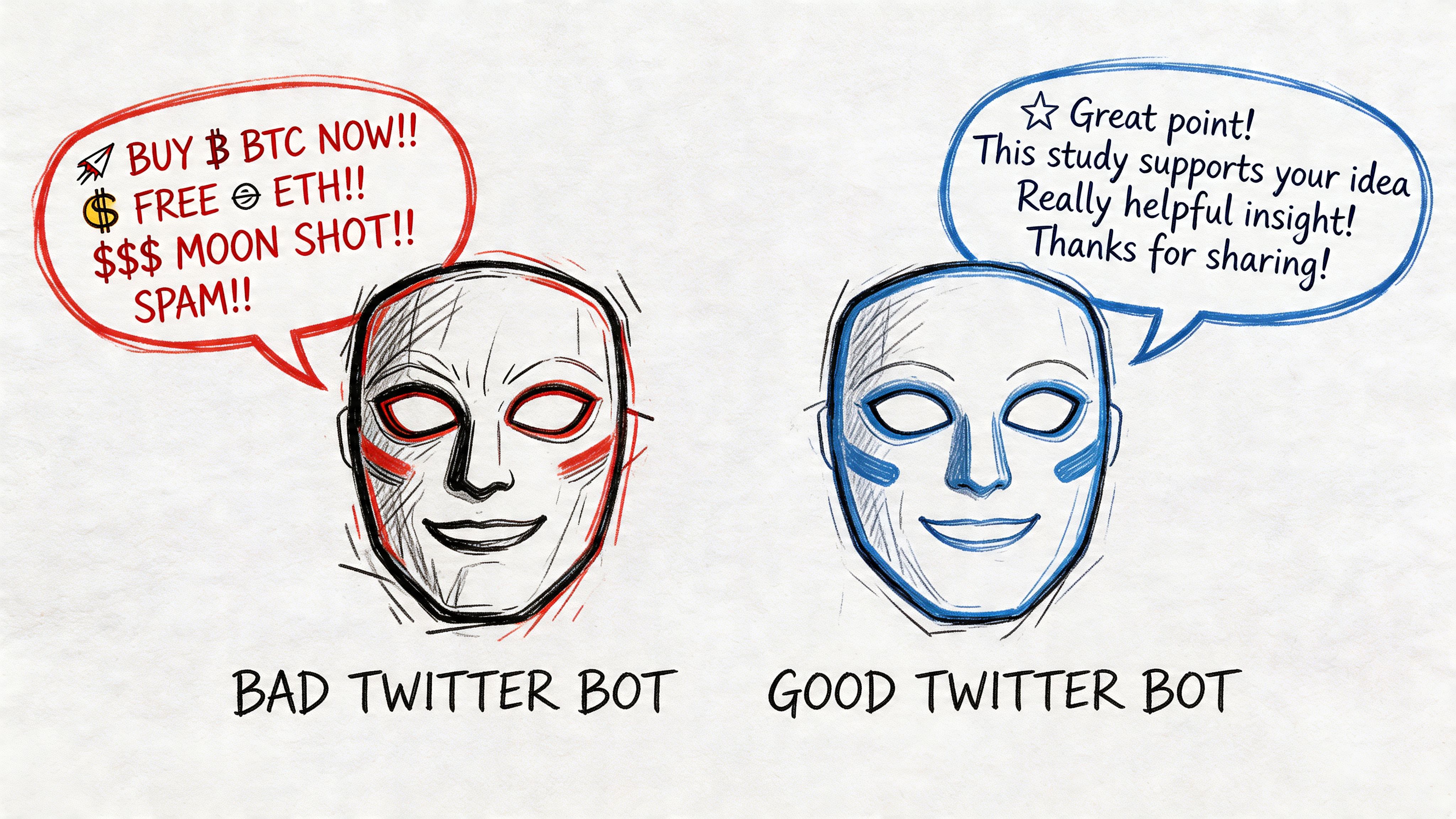

The Two Faces of the Twitter Bot Comment

The bad reputation is deserved. Everyone has seen the low-grade version of a twitter bot comment. Generic praise. Irrelevant replies. Crypto bait. Posts that say "Amazing insight" under a tweet about layoffs, product failures, or a sensitive news event.

Those bots don't build pipeline. They expose the account owner as lazy, damage trust, and train users to ignore replies that look even slightly automated.

What spam bots get wrong

A spam bot usually has three problems:

- It comments without context and treats every tweet like the same opportunity.

- It optimizes for volume alone so the account becomes recognizable for repetition.

- It mistakes visibility for demand and never turns replies into actual conversations.

This is why most anti-bot advice feels correct. Most bots people encounter are bad ones.

What strategic automation looks like

A more useful model is to treat automation as an assistant, not a replacement for judgment. In practice, that means software can monitor keywords, creators, and posting windows faster than a person can. But the brand still needs guardrails about where to comment, how to sound, and when to stay silent.

Practical rule: If the system can't tell the difference between a buying-signal post and a sensitive thread, it shouldn't be posting on your behalf.

That gray area is where ethical comment automation lives. A good system doesn't try to impersonate a person in every possible thread. It helps a person or team participate more consistently in the conversations they already care about.

For B2B lead generation, that can be useful. A thoughtful early reply on a relevant creator's post can drive profile visits, earn recognition, and open DMs. A sloppy one can do the opposite in public.

The point isn't to defend every bot. It is to separate spam behavior from strategic engagement behavior. If you don't make that distinction, you'll either over-automate and get burned, or avoid a channel that still rewards timely interaction.

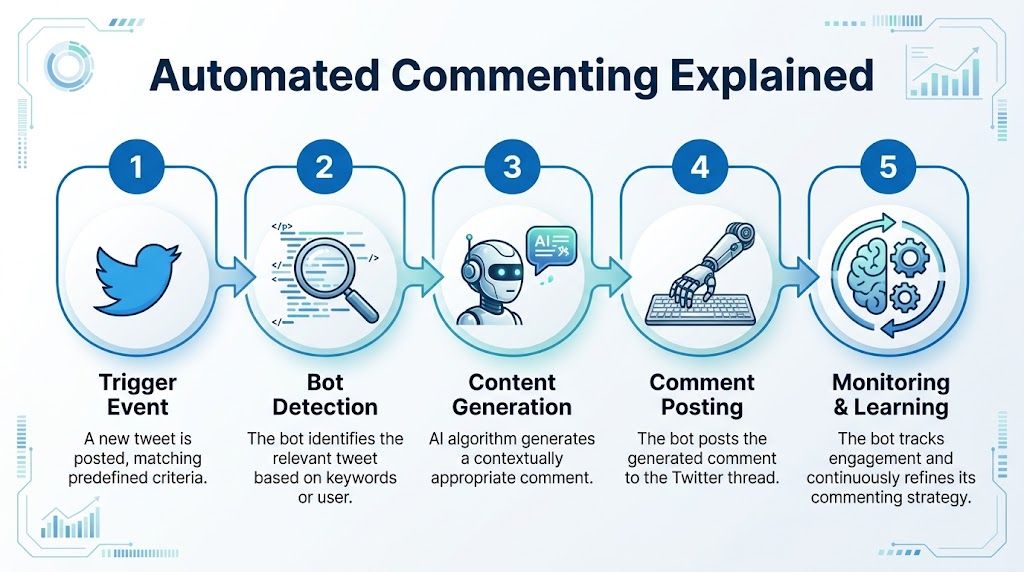

How Automated Commenting Actually Works

A twitter bot comment is often imagined as a crude script firing off canned replies. That's one version. The more capable setup looks closer to a workflow engine with filters, prompts, timing controls, and review rules.

The basic operating flow

At a high level, the system does five jobs:

- Listen for triggers: it watches for tweets from specific creators or tweets that match selected keywords, phrases, or Boolean filters.

- Decide whether the post qualifies: better tools diverge from cheap scripts on this point. They don't just match a word. They try to screen for relevance.

- Generate a response: the platform sends tweet context into a language model and asks for a reply that fits the account's tone, audience, and intent.

- Delay and publish: a good system spaces activity out so it doesn't look mechanical.

- Track what happened: teams review which replies earned profile visits, likes, follow-backs, or actual conversations.
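The five jobs above can be sketched as a single loop. This is a minimal illustration, not a real client. `run_pipeline`, `Tweet`, and `Outcome` are hypothetical names. The `triggers`, `qualifies`, and `draft_reply` callables stand in for keyword matching, a relevance screen, and a language-model call. Nothing is actually posted.

```python
import random
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Tweet:
    author: str
    text: str


@dataclass
class Outcome:
    tweet: Tweet
    reply: Optional[str]
    delay_seconds: float
    status: str  # "skipped" or "queued"


def run_pipeline(
    tweets: List[Tweet],
    triggers: Callable[[Tweet], bool],
    qualifies: Callable[[Tweet], bool],
    draft_reply: Callable[[Tweet], str],
    min_delay: float = 60.0,
    max_delay: float = 600.0,
    rng: Optional[random.Random] = None,
) -> List[Outcome]:
    """Listen, qualify, generate, delay, and track; nothing is posted here."""
    rng = rng or random.Random()
    log: List[Outcome] = []
    for tweet in tweets:
        # 1. Listen: ignore tweets that don't match any creator or keyword trigger.
        if not triggers(tweet):
            continue
        # 2. Qualify: a trigger match alone is not enough to reply.
        if not qualifies(tweet):
            log.append(Outcome(tweet, None, 0.0, "skipped"))
            continue
        # 3. Generate: draft_reply stands in for a language-model call.
        reply = draft_reply(tweet)
        # 4. Delay: choose a randomized wait instead of replying instantly.
        delay = rng.uniform(min_delay, max_delay)
        # 5. Track: keep a record of every decision for later review.
        log.append(Outcome(tweet, reply, delay, "queued"))
    return log
```

Each stage is a separate callable. That lets you tighten the qualify step without touching generation, which is usually where early tuning happens.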

The technical stack behind it

A common implementation uses Twitter API v2 to search for tweets and then calls an AI model to write the reply. A public GitHub example shows the pattern clearly. Keyword search goes in, an AI-generated comment comes out, and the reply is posted through the platform API, all within posting limits and timing constraints. The same implementation notes warn that high-velocity commenting can trigger shadowbans that reduce reach by over 70% within 48 hours. They also note that posting limits can be around 300 posts per 3 hours, depending on auth context and setup. Those limits are why randomized delays matter in the first place, as shown in this twitter comment bot implementation example.
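Here is a minimal sketch of the timing controls described above. It assumes you record post timestamps yourself. The class name `PostBudget` and its gap defaults (90 to 900 seconds) are hypothetical. The 300-posts-per-3-hours cap mirrors the figure cited in the implementation notes and should be treated as a ceiling to stay well under, not a target.

```python
import random
from collections import deque
from typing import Deque, Optional


class PostBudget:
    """Sliding-window cap on posts, plus randomized spacing between them."""

    def __init__(self, max_posts: int = 300, window_seconds: float = 3 * 3600,
                 min_gap: float = 90.0, max_gap: float = 900.0,
                 rng: Optional[random.Random] = None):
        self.max_posts = max_posts
        self.window = window_seconds
        self.min_gap = min_gap
        self.max_gap = max_gap
        self.rng = rng or random.Random()
        self.sent: Deque[float] = deque()  # timestamps of posts still in the window

    def _prune(self, now: float) -> None:
        # Drop posts that have aged out of the sliding window.
        while self.sent and now - self.sent[0] >= self.window:
            self.sent.popleft()

    def can_post(self, now: float) -> bool:
        """True if another post at time `now` stays under the cap."""
        self._prune(now)
        return len(self.sent) < self.max_posts

    def record(self, now: float) -> float:
        """Record a post at `now` and return a jittered wait before the next one."""
        self.sent.append(now)
        return self.rng.uniform(self.min_gap, self.max_gap)
```

In a real loop you would call `can_post`, publish, call `record`, and then sleep for the returned gap. The jitter is what keeps the cadence from looking mechanical.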

If you're evaluating tools or building your own workflow, it helps to understand the practical trade-offs behind an unofficial X API workflow. The API connection itself isn't the hard part. The hard part is handling relevance, rate limits, retries, content quality, and account safety together.

Why simple scripts usually fail

The clumsy version of automation acts like a factory machine with one motion. It sees a keyword and posts a reply. It doesn't understand whether the thread is a joke, a complaint, a breaking news event, or a customer support issue.

That creates obvious problems:

- Tone mismatch under serious or emotional posts

- Repeated phrasing across multiple threads

- Weak targeting because one keyword can mean several things

- No memory of what already worked or failed

The difference between useful automation and spam isn't the presence of AI. It's whether the system applies constraints before it posts.
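One way to make "constraints before posting" concrete is a gate that returns every reason to block a draft. This is a minimal sketch that uses plain substring matching for blocked topics. It also uses `difflib.SequenceMatcher` from the standard library as a rough proxy for repeated phrasing. `check_before_posting` and the 0.8 similarity threshold are hypothetical choices, not tuned values.

```python
from difflib import SequenceMatcher
from typing import List, Set


def check_before_posting(tweet_text: str, draft: str, history: List[str],
                         blocked_terms: Set[str], max_similarity: float = 0.8) -> List[str]:
    """Return the reasons a draft should NOT be posted (empty list means it passes)."""
    reasons = []
    lowered = tweet_text.lower()
    # Tone-mismatch guard: stay out of threads that mention blocked topics.
    hits = sorted(term for term in blocked_terms if term in lowered)
    if hits:
        reasons.append("blocked topic: " + ", ".join(hits))
    # Repetition guard: compare the draft with replies already posted.
    for past in history:
        if SequenceMatcher(None, draft.lower(), past.lower()).ratio() >= max_similarity:
            reasons.append("too similar to a previous reply")
            break
    return reasons
```

Returning reasons instead of a bare boolean makes the gate reviewable. You can see why a draft was held, not just that it was.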

What a safer setup includes

A practical setup usually includes some combination of:

- Creator targeting for accounts your buyers already follow

- Boolean keyword logic to narrow intent

- Prompt rules for tone, length, and prohibited topics

- Approval options for higher-risk threads

- History tracking so you don't repeat yourself publicly

This is why the "just use a free script" mindset usually goes sideways. Posting the comment is the easy part. Posting the right comment, in the right thread, at a sustainable pace, is the actual work.
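The creator targeting and Boolean keyword logic from the safer-setup list can be expressed as a small rule object. This sketch uses hypothetical names (`TargetRule`, `matches`) and matches single words only. Exclusions override everything. Otherwise, a tweet qualifies either by coming from a target creator or by satisfying the keyword expression. A real setup would likely need phrase matching as well.

```python
import re
from dataclasses import dataclass, field
from typing import Set


@dataclass
class TargetRule:
    creators: Set[str] = field(default_factory=set)  # lowercase handles, no "@"
    all_of: Set[str] = field(default_factory=set)    # every word must appear
    any_of: Set[str] = field(default_factory=set)    # at least one must appear
    none_of: Set[str] = field(default_factory=set)   # none may appear


def _words(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def matches(rule: TargetRule, author: str, text: str) -> bool:
    words = _words(text)
    if rule.none_of & words:
        return False  # exclusions always win, even for target creators
    if author.lower().lstrip("@") in rule.creators:
        return True
    if not rule.all_of and not rule.any_of:
        return False  # no keyword expression defined
    return rule.all_of <= words and (not rule.any_of or bool(rule.any_of & words))
```

For example, `all_of={"crm"}` with `any_of={"switching", "migrating"}` targets people changing tools. A bare mention of "CRM" won't match.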

The Unspoken Risks of Automated Engagement

The upside gets attention. The downside usually arrives later, in the form of a restricted account, a bad screenshot, or a month of activity that produced nothing useful.

Platform risk is the obvious one

X doesn't need to "ban bots" in a dramatic public way for your strategy to fail. It can reduce your visibility, limit distribution, or flag behavior patterns that make your replies ineffective. That kind of failure is easy to miss because the account still looks active from the outside.

Teams running several accounts face an extra layer of operational risk. Device overlap, pattern duplication, and synchronized behavior can make separate accounts look coordinated in ways you didn't intend. That's why multi-account operators should learn how to manage multiple Twitter/X accounts on one device without getting banned, and do it before scaling anything.

Brand risk is more dangerous

A bad automated comment doesn't disappear after it posts. People screenshot it, mock it, and attach your company name to it.

The problem isn't only obvious spam. It's also false familiarity, generic agreement, and comments that enter conversations where your brand doesn't belong. B2B buyers notice when a company treats public discussion as inventory.

Here is the reputational trap: an automated system can sound polished and still be wrong for the moment.

A comment can be grammatically perfect and strategically disastrous.

Some threads should be off-limits

Research on controversial online discussions found that bot replies can intensify polarization. In that study, bot-driven replies boosted user stance alignment by 28%, which means automated engagement can push people deeper into echo chambers in already heated discussions, according to this analysis of bots and stance formation in polarized Twitter debates.

For a B2B brand, that matters even if you aren't trying to be political. If your automation enters volatile threads because a keyword happened to match, your account can end up adjacent to conflict, outrage, or ideological pile-ons.


Strategic risk is the quiet killer

The worst outcome isn't always a ban or backlash. Sometimes it's a system that posts every day and still doesn't create demand.

Common signs of strategic failure include:

- Comments attract low-fit attention instead of buyers

- Profile visits rise but conversations don't

- The team can't explain which topics generate qualified replies

- Nobody reviews the output, so weak comments keep compounding

That's why comment automation needs the same scrutiny as outbound, paid social, or email sequences. If it isn't moving the account toward relevant conversations, it's just busy work with extra risk.

A Framework for Safe and Strategic Commenting

What's required isn't more automation. It's better constraints. The safest twitter bot comment strategy is selective, narrow, and supervised.

A useful starting point is to define what "good" looks like before any tool posts publicly. That means relevance first, speed second, and volume last. This matters even more because platform detection is getting tougher. SpiderAF's write-up on business impact reports that, as of 2026, up to 40% of low-quality bot comments are shadowbanned. That makes repetitive posting patterns a dead end in the long run, as discussed in this framework for bot risk and business impact on X.

Start with targeting discipline

Don't aim at "marketing," "sales," or another broad topic and hope the model figures it out. Choose a short list of creators, customer-language phrases, and product-adjacent terms that signal real interest.

Good targeting usually has these traits:

- Specific buyers in mind: the account should know whose attention it wants.

- Clear exclusion logic: if a keyword often appears in jokes, politics, or support complaints, filter it out.

- A defined reason to comment: teach the system what kind of post deserves engagement, such as an opinion, a question, a tactical thread, or a product use case.
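A "defined reason to comment" can be written down as explicit rules, which are easier to audit than a model's judgment. In this sketch, `comment_reason` and its keyword heuristics are illustrative assumptions. The checks run in a fixed order, and a post that matches none of them gets no comment.

```python
import re
from typing import Optional


def comment_reason(text: str) -> Optional[str]:
    """Return why a post deserves a reply, or None if there is no defined reason."""
    t = text.strip().lower()
    # Questions: ends with "?" or opens with a typical question word.
    if t.endswith("?") or t.startswith(("how ", "what ", "which ", "anyone ")):
        return "question"
    # Tactical threads: "1/" numbering or the word "thread".
    if re.search(r"\b1/|\bthread\b", t):
        return "tactical thread"
    # Product use cases: the author describes their own setup.
    if re.search(r"\b(we use|we switched|our setup|using)\b", t):
        return "product use case"
    # Opinions: explicit stance markers.
    if re.search(r"\b(unpopular opinion|hot take|i think|i believe)\b", t):
        return "opinion"
    return None  # no defined reason, so stay silent
```

Defaulting to `None` is the important design choice. Silence is the fallback, not a generic reply.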

If you're comparing channels before you build this motion, it helps to spend time understanding platform lead generation capabilities so your team doesn't force an X strategy onto an audience that behaves differently elsewhere.

Keep the human in the loop

The best safety feature is still judgment. Not every post should get an instant reply, even if the targeting matched.

Use a manual approval queue when:

- the topic touches layoffs, funding stress, regulation, politics, or public conflict

- the model sounds too polished or too familiar

- the post references a sensitive event or unclear subtext
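The approval conditions above can be turned into a routing step. This sketch assumes a hypothetical upstream `relevance` score between 0 and 1, standing in for "unclear subtext", which simple rules can't detect. `route_reply`, `SENSITIVE`, and `FAMILIAR` are made-up names, and the term lists are illustrative and far from complete.

```python
from dataclasses import dataclass
from typing import List

# Illustrative lists only; substring matching errs on the side of review.
SENSITIVE = ("layoff", "laid off", "funding", "runway", "regulation", "lawsuit",
             "election", "politic", "boycott")
FAMILIAR = ("buddy", "my friend", "love you", "as always", "we all know")


@dataclass
class Decision:
    queue: str  # "auto" or "manual"
    reasons: List[str]


def route_reply(tweet_text: str, draft: str, relevance: float,
                min_relevance: float = 0.7) -> Decision:
    tweet = tweet_text.lower()
    reply = draft.lower()
    reasons = []
    topic_hits = [term for term in SENSITIVE if term in tweet]
    if topic_hits:
        reasons.append("sensitive topic: " + ", ".join(topic_hits))
    if any(phrase in reply for phrase in FAMILIAR):
        reasons.append("draft sounds overly familiar")
    if relevance < min_relevance:
        reasons.append(f"low relevance score ({relevance:.2f})")
    # Any single reason is enough to send the draft to a human.
    return Decision("manual" if reasons else "auto", reasons)
```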

A tool such as PowerIn can automate monitoring by keywords and creators, generate context-aware replies, and support manual approval, brand-voice controls, and topic avoidance. Those safeguards matter more than the automation itself.

Field note: Human review shouldn't cover every comment forever. It should cover enough comments to teach the system where your brand belongs and where it doesn't.

Optimize for quality signals

Most spam bots chase output. Strategic commenters chase fit.

Use these operating rules:

| Characteristic | Spam Bot (High Risk) | Strategic Commenter (Low Risk) |
|---|---|---|
| Relevance | Broad keyword matching | Narrow keyword and creator targeting |
| Tone | Generic praise or canned text | Brand-guided, contextual language |
| Timing | Mechanical posting cadence | Varied timing with controlled delays |
| Topic selection | Comments anywhere it can | Avoids sensitive and controversial threads |
| Review process | No oversight | Human approval for risky cases |
| Goal | Raw visibility | Conversations with likely buyers |

Write prompts like policies

A prompt shouldn't just tell the model to "write a good reply." It should restrict behavior.

Give it rules such as:

- avoid agreement without substance

- don't ask for a DM in the first touch

- don't use emojis unless the account already does

- never comment on tragedy, politics, or personal conflict

- reference one concrete point from the original tweet

That policy mindset is what separates a professional system from a gimmick. If your prompt reads like a hype machine, your output will too.
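Treating the prompt as a policy also means checking the output against the same rules. This sketch uses hypothetical names (`build_prompt`, `policy_violations`), and its regular expressions are rough illustrations. The "reply only with SKIP" convention for off-limits threads is an assumption, not a feature of any particular model API.

```python
import re
from typing import List

BASE_RULES = [
    "Reference one concrete point from the original tweet.",
    "Do not offer agreement without substance; add an example, a number, or a question.",
    "Do not ask for a DM in a first reply.",
    "If the tweet involves tragedy, politics, or personal conflict, reply only with SKIP.",
]

EMOJI = re.compile("[\U0001F300-\U0001FAFF\u2600-\u27BF]")
DM_ASK = re.compile(r"\b(dm me|send me a dm|check your dms?)\b", re.IGNORECASE)
EMPTY_AGREEMENT = re.compile(
    r"^\s*(great (insight|post|point)|totally agree|so true|well said)[.!]*\s*$",
    re.IGNORECASE,
)


def build_prompt(tweet_text: str, brand_voice: str, account_uses_emoji: bool) -> str:
    """Assemble a policy-style prompt: voice, explicit rules, then the tweet."""
    rules = list(BASE_RULES)
    if not account_uses_emoji:
        rules.append("Do not use emojis.")
    lines = [f"You draft replies on X in this voice: {brand_voice}.", "Rules:"]
    lines += [f"- {rule}" for rule in rules]
    lines += ["", "Tweet:", tweet_text]
    return "\n".join(lines)


def policy_violations(draft: str, account_uses_emoji: bool) -> List[str]:
    """Re-check a draft against the same rules the prompt stated."""
    problems = []
    if DM_ASK.search(draft):
        problems.append("asks for a DM")
    if not account_uses_emoji and EMOJI.search(draft):
        problems.append("uses emoji")
    if EMPTY_AGREEMENT.match(draft):
        problems.append("agreement without substance")
    return problems
```

Stating a rule in the prompt and then verifying it in code catches the cases where the model ignores the instruction.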

Spotting Good and Bad Bots in the Wild

The fastest way to understand a twitter bot comment is to read replies with one question in mind: Did this add value to the thread, or only announce the account's existence?

Bad bots are usually easy to spot. They reply with generic approval, vague enthusiasm, or unnatural certainty. They post compliments that could fit any tweet from any industry.

What bad bots sound like

Common examples include replies like:

- "Great insight" with no reference to the post

- "Totally agree" when the original tweet asked a question

- promotional pivots that force a pitch into a non-commercial thread

- awkward urgency that sounds copied from cold outreach

These comments fail because they reveal the intent immediately. The account isn't participating. It's inserting itself.

What better bots do differently

The better category is quieter. These bots usually focus on one job and perform it consistently. Some reminder bots, accessibility bots, and utility bots are useful because people understand what they do and why they exist.

That principle carries into business use. A strategic automated commenter should behave more like an efficient assistant than a hype machine. It should notice the right post, draft a relevant reply, and stay within obvious social boundaries.

User reaction matters here. SpiderAF's article cites a 2022 study showing a 68% positive response rate to bots that feel relatable, human-like, and contextually relevant. That doesn't mean people want "more bots." It means people respond better when the interaction feels helpful instead of extractive.

Helpful automation doesn't hide behind clever wording. It earns tolerance by being relevant.

A simple test for evaluating replies

When you see an automated-looking reply in the wild, check it against this short rubric:

- Specificity: does it mention a real point from the tweet?

- Fit: does the tone match the thread?

- Restraint: does it avoid turning every interaction into a pitch?

- Intent: does it help the discussion, or just farm attention?

A strategic bot can pass those tests often enough to be useful. A spam bot almost never does.
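Two of the four rubric checks can be roughly automated, while fit and intent still need a human reader. This sketch uses shared content words (longer than three letters, minus a small stopword list) as a crude stand-in for specificity. It uses a hypothetical list of pitch phrases for restraint. The `rubric` function returns `None` for the checks it can't judge.

```python
import re
from typing import Dict, Optional, Set

STOPWORDS = {"this", "that", "with", "from", "have", "your", "about", "what",
             "just", "they", "will", "been", "were", "their", "really"}
PITCH_PHRASES = ("book a demo", "check out our", "link in bio", "free trial", "sign up")


def content_words(text: str) -> Set[str]:
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {w for w in words if len(w) > 3 and w not in STOPWORDS}


def rubric(tweet: str, reply: str) -> Dict[str, Optional[bool]]:
    lowered = reply.lower()
    return {
        # Specificity: the reply shares at least one content word with the tweet.
        "specificity": bool(content_words(tweet) & content_words(reply)),
        # Fit: tone matching needs a human reader.
        "fit": None,
        # Restraint: no obvious pitch phrases in the reply.
        "restraint": not any(p in lowered for p in PITCH_PHRASES),
        # Intent: whether it helps the discussion also needs a human reader.
        "intent": None,
    }
```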

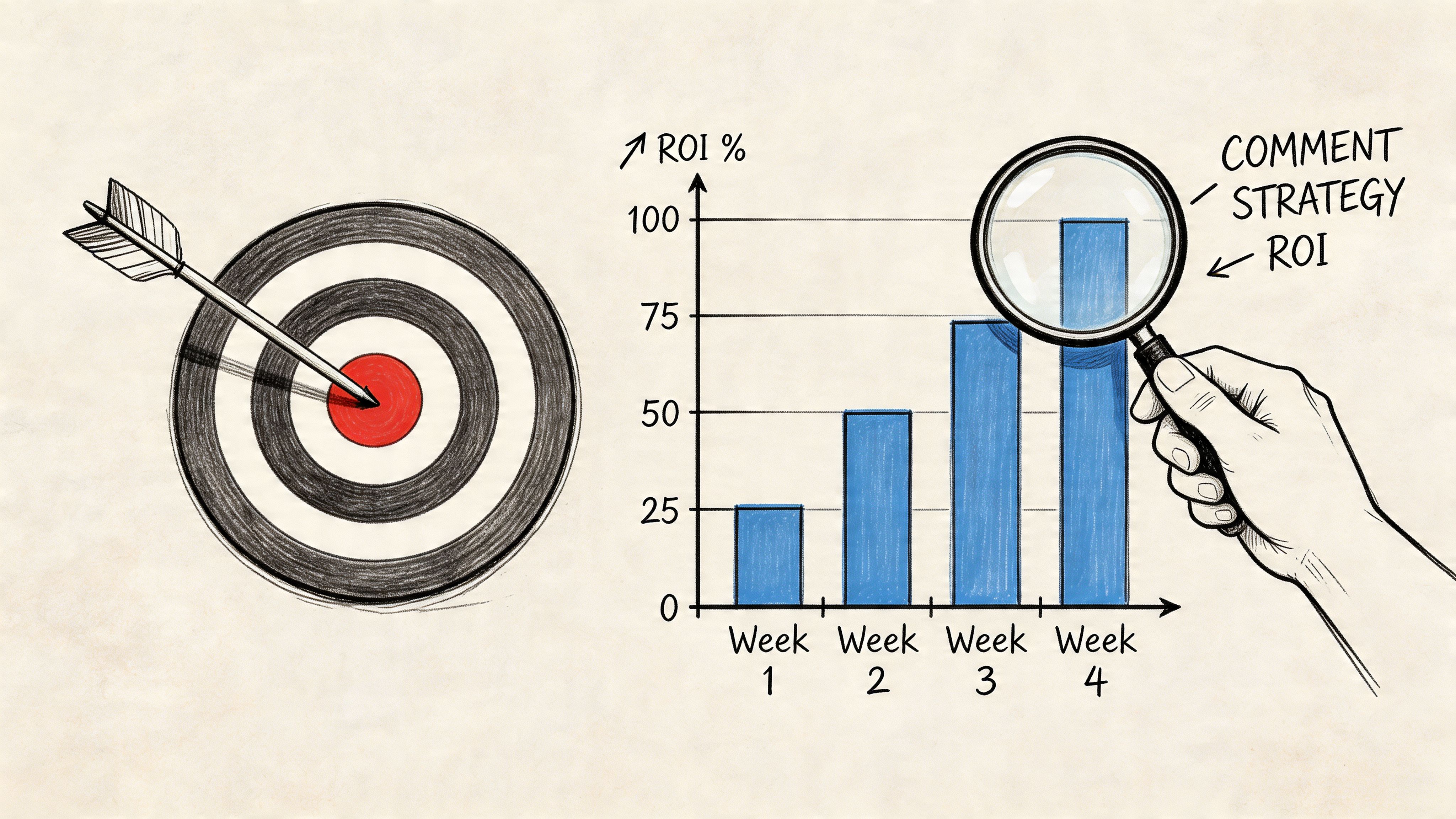

Measuring the ROI of Your Commenting Strategy

If you measure success by number of comments posted, you'll reward the wrong behavior. Volume is an activity metric, not a business outcome.

Track the path from comment to conversation

A practical scorecard for B2B teams looks like this:

- Relevant profile visits: are target accounts checking your profile after you comment?

- Follow quality: are new followers likely buyers, partners, candidates, or peers in your niche?

- Conversation starts: did the reply lead to a direct exchange, mention, or DM?

- Qualified lead signals: did anyone ask about your offer, process, or use case?
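The scorecard can be computed from a simple event log once someone has tagged each signal. This sketch assumes a hypothetical log of `(comment_id, event)` pairs with made-up event labels. The person or CRM doing the tagging decides whether a visitor is a target account or a follower is a likely buyer.

```python
from typing import Dict, Iterable, Set, Tuple

STAGES = ("relevant_profile_visit", "quality_follow",
          "conversation_start", "qualified_lead_signal")


def scorecard(posted: Set[str],
              events: Iterable[Tuple[str, str]]) -> Dict[str, Tuple[int, float]]:
    """For each stage: how many posted comments reached it, and their share of all comments."""
    reached: Dict[str, Set[str]] = {stage: set() for stage in STAGES}
    for comment_id, event in events:
        # Ignore untracked event types (likes, for example) and unknown comments.
        if event in reached and comment_id in posted:
            reached[event].add(comment_id)  # a set, so repeat events count once
    total = len(posted)
    return {
        stage: (len(ids), len(ids) / total if total else 0.0)
        for stage, ids in reached.items()
    }
```

Counting distinct comments per stage keeps one busy thread from inflating the numbers. The question becomes how many comments moved someone forward, not how many events fired.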

Use your profile as the conversion layer

Comments attract attention, but your profile converts it. If someone clicks through and finds a vague bio, no clear offer, and a dead link, the value of your commenting drops fast.

It helps to create a custom URL for your Twitter bio so you can send profile visitors to a cleaner destination and track what happened after the click. That gives you a better read on whether your commenting motion is producing curiosity or actual intent.

Build a simple review loop

You don't need a complex dashboard at the start. A spreadsheet or CRM note field can work if the team stays disciplined.

Review weekly:

- which creators triggered the best conversations

- which keywords produced weak-fit traffic

- which replies started back-and-forth discussion

- which comments looked good on paper but produced nothing

If you're comparing software support for this process, a list of social media growth tools can help you evaluate which products handle workflow, tracking, and engagement history well enough for repeatable use.
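The weekly review questions map onto a few aggregations over a comment log. This sketch assumes a hypothetical `CommentRecord` shape that a spreadsheet export could fill. "Back-and-forth" is approximated as at least one reply. "Weak fit" is approximated as a keyword whose low-fit visits outnumber its fit visits.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class CommentRecord:
    comment_id: str
    creator: str         # whose post the comment replied to
    keyword: str         # which trigger matched
    replies_received: int
    fit_visits: int      # profile visits tagged as likely buyers
    low_fit_visits: int  # profile visits tagged as off-target


def weekly_review(records: List[CommentRecord]) -> Dict[str, List[str]]:
    convo_by_creator: Counter = Counter()
    fit: Counter = Counter()
    low_fit: Counter = Counter()
    silent: List[str] = []
    for r in records:
        if r.replies_received > 0:
            convo_by_creator[r.creator] += 1
        fit[r.keyword] += r.fit_visits
        low_fit[r.keyword] += r.low_fit_visits
        if r.replies_received == 0 and r.fit_visits == 0 and r.low_fit_visits == 0:
            silent.append(r.comment_id)
    return {
        # Creators ranked by how many comments started a discussion.
        "best_creators": [c for c, _ in convo_by_creator.most_common()],
        # Keywords that mostly attracted off-target visitors.
        "weak_fit_keywords": sorted(k for k in low_fit if low_fit[k] > fit[k]),
        # Comments that produced no visible result at all.
        "no_result": silent,
    }
```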

Measure comments the way you measure outbound touches: by downstream movement, not by how many went out.

Frequently Asked Questions About Twitter Comment Bots

Can you still get banned if you follow best practices?

Yes. Best practices reduce risk. They don't remove it. You're still operating on a platform that changes enforcement patterns, spam thresholds, and visibility rules over time.

The safer mindset is to ask, "Would this behavior still make sense if a human reviewed the account's replies one by one?" If the answer is no, the setup is too aggressive.

How is an AI commenting platform different from a simple script?

A script can post replies. That's the smallest part of the problem.

A real operating system for commenting needs targeting logic, content controls, timing variation, review options, history, and exclusions. Without those layers, you're not running a strategy. You're automating a liability.

What's a realistic posting volume?

There isn't one universal number that stays safe across every account, niche, and setup. The wrong way to approach this is to ask how much activity you can squeeze out before detection.

A better question is how many comments your account can post while staying relevant, varied, and believable. If quality drops when you increase volume, you've already crossed the useful limit.

Should you automate replies on controversial topics?

No, not by default. Sensitive threads create more downside than upside for most B2B brands.

Even if the model can produce a polished response, your company gains little from entering unpredictable conversations where tone, context, and public reaction can shift quickly.

How do you choose the right keywords and creators?

Start with buyer language, not industry buzzwords. Look for phrases prospects use when they describe a problem, compare tools, ask for recommendations, or react to a workflow issue.

Then choose creators whose audiences overlap with your market. If a creator gets high engagement but attracts the wrong crowd, visibility there won't help much.

What's the best use case for a twitter bot comment?

The strongest use case is early engagement in relevant business conversations where your profile, offer, and expertise naturally fit. The weakest use case is mass visibility with no filtering.

If a comment can start a credible conversation, it's worth testing. If it only increases output, it probably isn't.

If you want a controlled way to test comment automation, PowerIn is built for AI-assisted engagement on X and LinkedIn with keyword and creator monitoring, contextual comment generation, and safeguards like manual approval and brand-voice controls. Use it like an assistant, not a spam engine. That's the difference between extra visibility and unnecessary risk.

