
Twitter Auto Comment Bot: Scale Leads, Not Spam in 2026

You’re probably doing some version of this right now.

You open X, search a keyword tied to your market, skim a few threads, and try to leave comments that are smart enough to get noticed but not so polished that they read like sales copy. Then a prospect replies, you get pulled into DMs, and the rest of your outreach plan disappears for the day. Tomorrow you do it again.

That routine works, but it doesn’t scale cleanly. Manual engagement on X can create real pipeline, especially for founders, sales reps, recruiters, and consultants. The problem is that it asks for speed, consistency, and judgment at the same time. Most people can manage two. Very few can maintain all three every day without burning hours.

The Endless Grind of Manual X/Twitter Engagement

The hardest part of X engagement isn’t writing a comment. It’s finding the right moment to write one.

A B2B founder might track phrases like “looking for a CRM,” “need a sales tool,” or “any recommendations for outbound.” A recruiter might monitor hiring discussions. A consultant might follow a shortlist of creators and buyers in a niche. In every case, the workflow is repetitive. Search, scan, judge intent, reply fast, repeat.

Why manual engagement breaks down

The first problem is timing. Good opportunities don’t wait around. A strong comment placed early on a relevant post can put your profile in front of the right people. A comment posted hours later often lands after the thread has already cooled off.

The second problem is context switching. You go into X to engage, then get dragged into feeds, side conversations, notifications, and research. What should’ve been a focused prospecting block becomes a scattered hour.

Then there’s the quality issue. Some days you’ll leave sharp, useful comments. Other days you’ll force it because you feel like you need to stay visible.

Manual commenting works best when your attention is fresh. Most people are doing it between calls, after meetings, or late in the day. That’s when quality slips.

What this costs in practice

The trade-off is simple. Every minute spent hunting for posts is a minute you’re not spending on sales calls, proposals, onboarding, or product work.

That doesn’t mean manual engagement is bad. It means it’s expensive.

A lot of teams respond by going to extremes. They either keep everything manual and accept inconsistency, or they install a random twitter auto comment bot and hope it saves time. That second path causes most of the horror stories people associate with automation.

Here’s a better way to think about it:

- Manual engagement is precise. You control tone, timing, and relevance.

- Crude automation is fast. It also produces obvious spam and account risk.

- Well-designed automation sits in the middle. It handles monitoring and first-pass response logic so you can spend your time on conversations that matter.

That middle ground is where serious lead generation happens. Not with spray-and-pray replies, and not with endless scrolling.

Understanding Twitter Auto Comment Bots

A twitter auto comment bot is software that watches for specific activity on X and posts replies based on rules, prompts, or templates. The simplest version looks for a keyword and fires off a canned response. The more advanced version evaluates the post, generates a relevant comment, and applies timing controls so activity looks less mechanical.

Think of it as a digital research assistant

The useful analogy is a digital research assistant.

It watches a set of conversations you care about, flags posts that match your criteria, and helps publish a response. That response might be fully automatic, partially approved by a human, or queued for review. The underlying job is the same. Reduce the manual effort required to notice and join relevant conversations.

That broad category includes very different tools.

At one end of the spectrum

You have crude bots that:

- Use generic templates like “Great post” or “Totally agree”

- Reply too often and too quickly

- Ignore context and post mismatched comments

- Rely on weak variation tricks that still feel repetitive

These are the bots people hate. They clutter threads and damage the account using them.

At the other end

You have more disciplined tools that:

- Monitor targeted keywords or creators

- Generate context-aware replies

- Limit activity within platform constraints

- Support manual approval and history review

- Focus on lead generation, not vanity spam

That distinction matters because X has a long history with automation. By 2020, studies indicated that approximately 15% of all Twitter accounts were bots, or around 45 million bot accounts out of an estimated 300 million active users, according to the n8n analysis of Twitter bot activity. That scale shaped platform enforcement and explains why any automated commenting strategy has to be built around restraint.

Why businesses use them anyway

The demand never disappeared because the use case is real. Sales teams want to catch intent signals early. Founders want to build visibility around a niche. Agencies want a repeatable way to stay present in client markets. Customer-facing teams want a faster way to engage mentions and questions.

A twitter auto comment bot isn’t good or bad in itself. It’s a mechanism. What matters is the logic behind it and the standards of the operator using it.

Practical rule: If the bot’s main promise is volume, it’s usually a bad sign. If the main promise is relevance, control, and timing, it’s at least worth evaluating.

The useful dividing line is this. Spam bots try to imitate attention. Professional engagement tools try to support it.

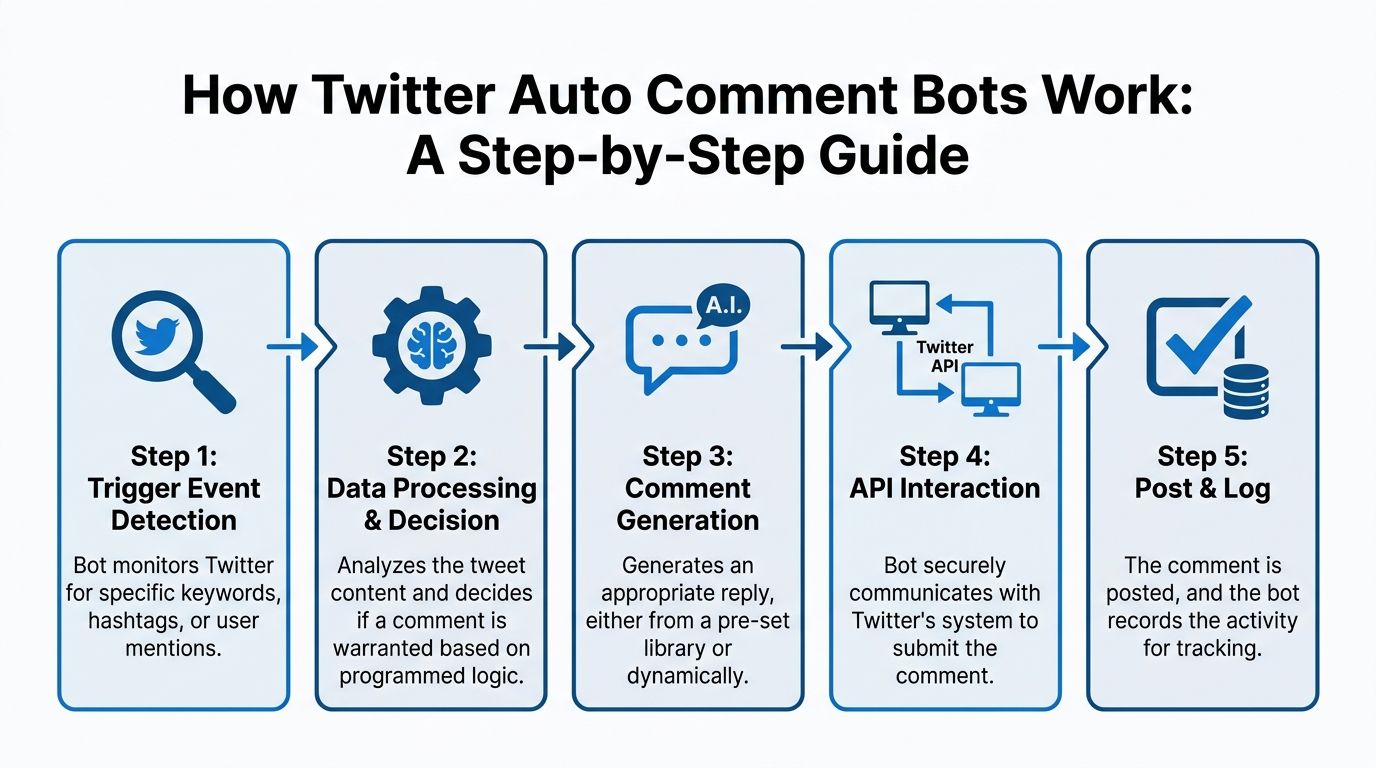

The Technical Mechanics of Auto Commenting

Most auto-comment systems follow the same basic workflow. They listen for new posts, decide whether a reply is appropriate, generate that reply, and send it through X’s infrastructure. You don’t need to be a developer to understand the moving parts, but you do need to know where the failure points are.

The core workflow

A typical bot starts with a trigger. That trigger might be a keyword, a hashtag, a mention, or a list of target accounts.

Then the system checks X for matching posts. Many implementations use Twitter API v2 for this. The bot runs a polling loop: check, wait, check again. According to the GitHub implementation notes for a Twitter comment bot, these bots typically wait 15 to 60 minutes between checks to stay within rate limits (the notes cite 300 search requests per 15 minutes; actual limits depend on the endpoint and access tier), and ignoring those constraints is a primary cause of account suspension.
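To make the pacing arithmetic concrete, here is a minimal Python sketch of a polling loop with a request budget. It assumes the 300-requests-per-15-minutes figure quoted above, and the names `min_poll_interval`, `poll`, `fetch`, and `handle` are hypothetical placeholders, not part of any real X client library.

```python
import time
from typing import Callable, Iterable

WINDOW_SECONDS = 15 * 60      # length of one rate-limit window
REQUESTS_PER_WINDOW = 300     # figure quoted in the text; check your own API tier


def min_poll_interval(requests_per_window: int = REQUESTS_PER_WINDOW,
                      window_seconds: int = WINDOW_SECONDS) -> float:
    """Smallest gap between polls that stays within the window budget."""
    return window_seconds / requests_per_window


def poll(fetch: Callable[[], Iterable[str]],
         handle: Callable[[str], None],
         interval_seconds: float,
         max_cycles: int,
         sleep: Callable[[float], None] = time.sleep) -> int:
    """Run a fixed number of poll cycles; return how many posts were handled."""
    if interval_seconds < min_poll_interval():
        raise ValueError("interval would exceed the request budget")
    handled = 0
    for cycle in range(max_cycles):
        for post in fetch():          # one search request per cycle (assumed)
            handle(post)
            handled += 1
        if cycle < max_cycles - 1:    # no need to wait after the last cycle
            sleep(interval_seconds)
    return handled
```

Under these assumptions the hard floor is one request every 3 seconds, so a 15-minute interval uses a tiny fraction of the budget. The long delays are less about the search quota and more about keeping overall activity slow and predictable.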

What each step actually does

Listening

The bot queries X for new posts tied to a rule set. That rule might be broad, like an industry keyword, or narrow, like posts from selected creators.

Filtering

Not every match deserves a reply. Better systems exclude weak-fit posts, spam terms, or low-value chatter. This is where logic matters most.
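A filtering step can be as simple as include and exclude terms plus a minimum length. The sketch below is one possible shape, not how any particular tool does it; the `Rule` class, its fields, and the `min_words` threshold are assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Rule:
    include: list[str]                                  # phrases that signal intent
    exclude: list[str] = field(default_factory=list)    # spam or off-topic markers
    min_words: int = 5                                  # skip very short posts


def matches(rule: Rule, text: str) -> bool:
    """Return True only when a post passes every check in the rule."""
    lowered = text.lower()
    if len(lowered.split()) < rule.min_words:
        return False
    if any(term.lower() in lowered for term in rule.exclude):
        return False
    return any(term.lower() in lowered for term in rule.include)


rule = Rule(include=["looking for a CRM", "need a sales tool"],
            exclude=["giveaway", "retweet to win"])
```

Exclusions run before inclusions on purpose: a post that matches a buying phrase but also looks like a promotion is still rejected.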

Comment generation

The bot uses either templates or AI prompts to build a response. Templates are predictable. AI can be more flexible, but only if the prompts are strict enough to keep comments on-topic.

Posting

Once the reply is approved by the system, or by a user, it gets posted through an API call or a session-based method depending on the tool.

Logging

Mature setups record what was posted, when, and under what rule. Without logs, you can’t audit quality or spot patterns that may trigger account issues.
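As a rough illustration of the logging step, the sketch below appends one JSON line per posted comment and reads the file back for review. The field names and the `log_comment` and `read_log` helpers are hypothetical; a real tool may store history differently.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def log_comment(log_path: Path, rule_name: str, post_id: str, comment: str) -> dict:
    """Append one record describing what was posted, when, and under which rule."""
    record = {
        "posted_at": datetime.now(timezone.utc).isoformat(),
        "rule": rule_name,
        "post_id": post_id,
        "comment": comment,
    }
    with log_path.open("a", encoding="utf-8") as handle:
        handle.write(json.dumps(record) + "\n")
    return record


def read_log(log_path: Path) -> list[dict]:
    """Load every logged record for auditing."""
    with log_path.open(encoding="utf-8") as handle:
        return [json.loads(line) for line in handle if line.strip()]
```

An append-only file like this is enough to answer the audit questions that matter: which rule produced a given comment, and how often each rule fires.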


Why rate limits matter more than most people think

The technical side isn’t just about getting comments out. It’s about controlling how often your system asks X for data and how often it publishes. If a bot checks too aggressively or posts in a rigid pattern, it creates detectable behavior.

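That’s why disciplined setups use:

- Scheduled polling windows instead of constant checks

- Delays between actions instead of burst posting

- Backoff logic when requests fail

- Target constraints so replies stay narrow and relevant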

If you’re evaluating tools, it helps to understand the trade-offs between official access and workarounds. This overview of the unofficial X (Twitter) API is useful because it shows why many builders end up balancing reliability, access, and compliance rather than treating automation as a simple on-off switch.

If a tool can’t explain how it handles rate limits, action pacing, and failures, it’s not a serious tool. It’s a liability with a dashboard.
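Backoff logic on failed requests is one of the easier pieces to reason about in code. The following is a minimal sketch of capped exponential backoff with a little random jitter; the base delay, cap, and helper names are assumptions, and a production client would catch its specific rate-limit or network error instead of a bare `Exception`.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def backoff_delays(base: float = 60.0, factor: float = 2.0,
                   cap: float = 3600.0, attempts: int = 5) -> list[float]:
    """Delays that grow geometrically and never exceed the cap."""
    return [min(cap, base * factor ** n) for n in range(attempts)]


def call_with_backoff(action: Callable[[], T],
                      delays: list[float],
                      sleep: Callable[[float], None] = time.sleep,
                      jitter: float = 0.1) -> T:
    """Retry `action`, waiting longer after each failure."""
    for delay in delays:
        try:
            return action()
        except Exception:  # assumption: narrow this to the client's own error type
            sleep(delay * (1 + random.uniform(0, jitter)))
    return action()  # final attempt; any error propagates to the caller
```

With the defaults, the waits are 60, 120, 240, 480, and 960 seconds before jitter. The point is that a failing system slows down instead of hammering the platform.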

Where bots usually go wrong

The common failures aren’t mysterious.

| Failure point | What happens | Why it matters |
|---|---|---|
| Overbroad targeting | The bot replies to weak-fit posts | Relevance drops fast |
| Tight polling loops | Too many requests in a short period | Platform flags increase |
| Repetitive wording | Comments start looking machine-made | Trust drops with both users and platform |
| No approval layer | Bad comments go live unchecked | Reputation damage becomes public |
| No activity log | Teams can’t review patterns | Problems repeat |

A useful auto-comment setup doesn’t need to be complex. It needs to be controlled. Most teams don’t need a bot that comments everywhere. They need a system that notices the right posts and behaves like a careful operator.

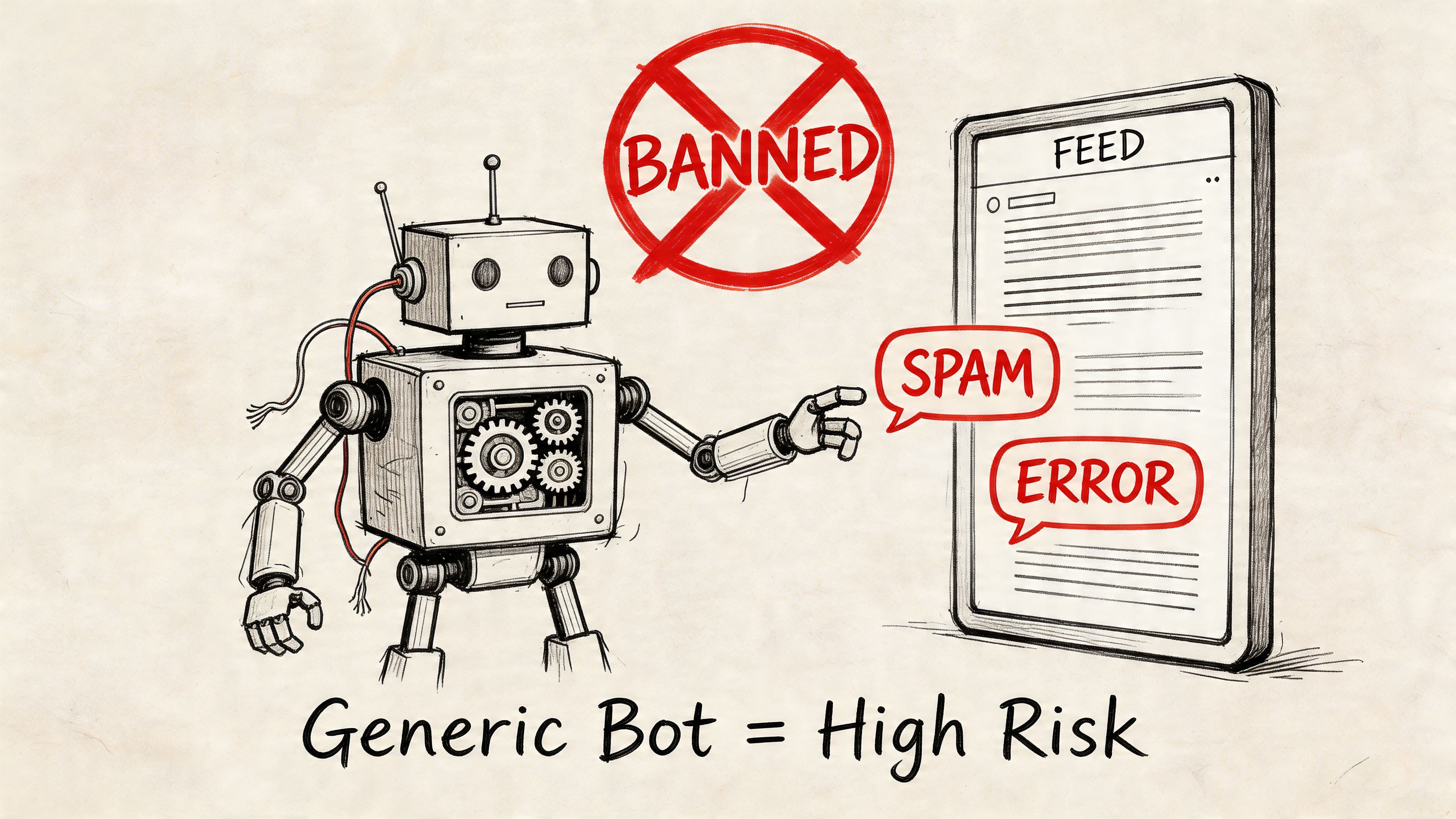

The High Risks of Using a Generic Auto Comment Bot

Most generic auto-comment bots fail in the same way. They optimize for output and ignore context, timing, and reputation. That makes them risky even before you get into platform rules.

A sales rep can recover from a weak post. Recovering from a pattern of bad automated comments is harder, because the damage spreads in three directions at once. X can restrict the account. Prospects can write you off. And your own team can lose trust in the channel because they no longer know whether poor results come from strategy or tooling.

Platform risk is real

Some operators think they can solve the spam problem with comment variation. They use SpinTax or similar templating so every reply looks slightly different. That helps at the surface level, but it doesn’t solve the deeper issue of mechanical behavior.

Commercial bots using those variation methods still face a 20-30% account suspension risk if they lack human-like timing and scheduling, according to the Chrome Web Store listing for a Twitter/X comment bot. The issue isn’t just repeated wording. It’s unnatural engagement patterns.
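To see why template variation only helps at the surface, it is worth expanding a SpinTax string and looking at the results. This is a small sketch that handles flat, non-nested groups only; the `expand` function is written for illustration and is not taken from any specific bot.

```python
import itertools
import re

_GROUP = re.compile(r"\{([^{}]*)\}")


def expand(template: str) -> list[str]:
    """Return every variant of a flat (non-nested) SpinTax template."""
    parts = _GROUP.split(template)
    # With one capture group, re.split alternates literal text and group contents.
    choices = [part.split("|") if i % 2 else [part] for i, part in enumerate(parts)]
    return ["".join(combo) for combo in itertools.product(*choices)]


variants = expand("{Great|Nice} post, {thanks|appreciate it}!")
```

Every variant shares the same skeleton and the same lack of connection to the post it replies to. The wording changes while the pattern stays fixed, and that pattern is what both readers and the platform notice.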

What bad automation looks like in the wild

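You’ve seen these replies before:

- Empty praise that adds nothing to the thread

- Mismatched comments that clearly don’t fit the original post

- Immediate responses posted so fast they look impossible

- Identical tone across every topic, whether the post is serious, technical, or casual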

That pattern tells both the platform and your market the same thing. The account isn’t participating. It’s injecting noise.

For a B2B brand, that’s expensive. If you sell a serious product, your comments are part of your first impression. A generic bot can make you look lazy, desperate, or careless.

Reputational damage usually comes before suspension

A lot of people obsess over bans and overlook the quieter problem. You can hurt your credibility long before the platform takes action.

Prospects don’t need a formal warning from X to decide your account isn’t worth engaging with. One tone-deaf reply under the wrong thread can make a founder, buyer, or recruiter ignore you permanently. In B2B, a comment is rarely “just a comment.” It signals taste, judgment, and whether you understand the room.

For a deeper look at how that risk shows up in comment automation, this breakdown of Twitter bot comment behavior and account exposure is worth reading before you connect any tool to your profile.


Security is the underrated problem

The ugliest trade-off with low-end bots is often security.

A lot of browser extensions and unofficial tools ask for deep account access, session handling, or direct credentials. If the tool is sloppy, you’re not just risking awkward comments. You’re risking account compromise, unstable sessions, and unknown handling of your data.

That doesn’t mean every extension is unsafe. It means you need to treat access as a security decision, not a growth hack.

Security check: If a tool is vague about how it posts, how it stores access, or how you can review activity, assume you’re carrying more risk than the landing page admits.

A quick decision filter

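Before using any twitter auto comment bot, ask:

- Can I control targeting tightly? Broad keyword matching usually creates junk.

- Can I review outputs? If not, bad comments will eventually go live.

- Does it pace activity? Fast, rigid posting is an obvious red flag.

- Can I audit history? No logs means no accountability.

- Would I be comfortable if every automated comment were shown to a prospect? If the answer is no, don’t run it.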

The problem isn’t automation itself. The problem is crude automation in a channel where visibility and trust are linked.

Smart Automation Use Cases for Lead Generation

Used carefully, automation can make X a practical part of your pipeline instead of a side activity you squeeze in between tasks. The key is to treat commenting as a lead generation workflow, not a vanity exercise.

Effective, context-aware auto-commenting can drive up to 5x increases in profile visits and 20-30% boosts in overall engagement metrics like replies and likes, based on the Twitter AI Bot Chrome Web Store listing. Those outcomes don’t come from blasting generic comments. They come from relevance, timing, and selecting the right conversations.

Buying-intent capture

The cleanest use case is monitoring posts that signal a problem, need, or active search.

A founder posting frustration about outreach, hiring, analytics, scheduling, or CRM workflow is giving you a live opening. The right comment doesn’t pitch hard. It helps, clarifies, or adds a practical angle. That earns the profile visit, which is often the real first conversion on X.

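Track these signals:

- Profile visits from comments

- Replies that turn into direct conversation

- Qualified inbound messages

- Posts that consistently trigger interest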

Authority building in a narrow niche

Some accounts don’t need thousands of comments. They need a steady stream of good ones in the right rooms.

If you sell to RevOps leaders, cybersecurity teams, recruiters, or agency owners, consistent comments under respected voices in that niche can shape how people perceive your expertise. The goal isn’t to dominate every thread. It’s to become a familiar, credible participant.

A good comment strategy should make people think, “I keep seeing this person say useful things,” not, “Why is this account everywhere?”

Automation supports consistency. It helps you stay present when the market is talking, especially during hours when you’re in meetings or focused on delivery.

Market intelligence and message testing

Auto-commenting can also improve research.

When you monitor competitors, category terms, and recurring objections, you start seeing the same pains surface in public. That gives you a live stream of language buyers use in their own words. Your team can use those phrases in positioning, outbound messaging, onboarding content, and follow-up questions.

If you’re building a broader system, this guide to a winning B2B marketing automation strategy is a useful companion because it frames automation as a coordinated demand-generation process rather than a pile of disconnected tools.

What to measure instead of chasing vanity

The wrong KPI for comment automation is “How many comments did we publish?”

A better scorecard looks like this:

| KPI | Why it matters |
|---|---|
| Profile visits | Indicates whether comments create curiosity |
| Meaningful replies | Shows comment quality, not just visibility |
| New relevant followers | Reflects audience fit |
| DMs or inbound asks | Strong signal of intent |
| Comment-to-conversation rate | Connects activity to pipeline |
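The last row of the scorecard is a ratio, so it helps to pin down one way of computing it. The sketch below assumes a "conversation" means a meaningful reply or an inbound DM; that definition, the `WeeklyStats` fields, and the sample numbers are illustrative assumptions, not measured results.

```python
from dataclasses import dataclass


@dataclass
class WeeklyStats:
    comments_posted: int
    profile_visits: int
    meaningful_replies: int
    inbound_dms: int


def comment_to_conversation_rate(stats: WeeklyStats) -> float:
    """Share of posted comments that led to a reply or an inbound DM."""
    if stats.comments_posted == 0:
        return 0.0
    conversations = stats.meaningful_replies + stats.inbound_dms
    return conversations / stats.comments_posted


# Made-up example week: 40 comments, 6 meaningful replies, 2 inbound DMs.
example = WeeklyStats(comments_posted=40, profile_visits=120,
                      meaningful_replies=6, inbound_dms=2)
rate = comment_to_conversation_rate(example)  # 8 / 40 = 0.2
```

Tracking the ratio instead of the raw comment count keeps the incentive pointed at relevance. Posting more comments only helps if conversations rise with them.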

If you want tooling built around that kind of workflow, this list of AI sales prospecting tools gives useful context on where comment automation fits relative to other prospecting channels.

Smart automation works when it narrows your focus. It should help you reach likely buyers faster, not tempt you to engage with everyone.

PowerIn: A Secure Alternative for Human-Sounding Engagement

Most tools in this category force a bad choice. You either get manual work that doesn’t scale, or automation that behaves like spam. There’s a more practical model for B2B teams. Use software to monitor, filter, and draft comments, then add enough control that the output still feels like a professional account speaking in public.

That’s where PowerIn fits. It automates commenting on LinkedIn and X by monitoring selected keywords and creators, generating contextual replies, and supporting controls such as manual approval, history tracking, CSV export, local business-hour timing, and brand voice settings. For teams that want reach without handing their reputation to a crude bot, that structure matters.

What separates professional tooling from junk automation

The difference isn’t that one tool uses AI and another doesn’t. Plenty of bad bots now claim AI.

The difference is whether the product is built around restraint and relevance.

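A professional setup should do a few things well:

- Target narrowly so comments appear in the right conversations

- Adapt tone so replies match the context of the post

- Offer review controls for accounts that can’t afford public mistakes

- Keep a visible record so teams can audit what happened

- Avoid hyperactive patterns that create obvious account risk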

That matters even more now because B2B teams expect automation to work across channels. If you’re already using a site-side ChatGPT chatbot for visitor engagement, the natural next step is making your social engagement workflow equally structured instead of improvising everything on X by hand.

Crude Bots vs. PowerIn: A Strategic Comparison

| Feature | Generic Auto Comment Bot | PowerIn Engagement Platform |
|---|---|---|
| Targeting | Broad keyword matching, often noisy | Keyword and creator-based targeting with Boolean logic |
| Comment quality | Generic templates or weak variation | Contextual, human-sounding comment generation |
| Safety controls | Often limited or unclear | Manual approval and history tracking available |
| Brand fit | Same tone across topics | Tone, emojis, hashtags, and language can be tailored |
| Timing | Can look rigid or overly aggressive | Configurable timing with business-hour alignment |
| Operational visibility | Minimal auditing | Comment history and export options support review |
| Use case fit | Volume-first engagement | B2B lead generation and personal brand consistency |

Why this model works better

A founder or SDR doesn’t need infinite commenting capacity. They need reliable participation in a small set of high-value conversations.

That means the strongest feature often isn’t full automation. It’s selective automation. Let the platform monitor key phrases, surface relevant posts, generate a sensible first draft, and keep activity within a pattern that doesn’t scream “bot.” Then step in when human judgment is needed.

This is especially useful for teams with uneven schedules. If your best prospects post while you’re on calls, traveling, or offline, a controlled engagement system protects your visibility without forcing you into nonstop manual scanning.

Where human oversight still matters

No serious operator should pretend automation removes judgment. It doesn’t.

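You still need a person to decide:

- whether a topic is worth engaging at all

- whether a reply feels on-brand

- whether the conversation should move to DM

- whether a high-value thread deserves a custom manual response instead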

That’s the right relationship between software and operator. The tool handles monitoring and repetitive detection work. The human handles nuance, escalation, and relationship-building.

Good automation doesn’t replace your voice. It protects your time so your voice shows up more consistently where it counts.

Who should use this kind of tool

This model is a fit for people who already know X can produce business value but can’t justify living in the feed all day.

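It’s especially useful for:

- B2B founders building audience and pipeline at the same time

- Sales reps tracking intent signals and category conversations

- Recruiters staying visible in hiring-related threads

- Consultants and agencies who need consistent public proof of expertise

- Lean marketing teams that need repeatable engagement without adding headcount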

The important point is that a safer tool doesn’t make reckless strategy safe. You still need sharp targeting, sensible comment logic, and standards for what should never be automated. But the right platform gives you a workable operating system for engagement instead of a blunt instrument.

From Automation to Authentic Connection

The choice isn’t manual work versus bots. It’s crude automation versus disciplined automation.

Manual engagement will always have a place. Some replies should be written one by one. Some threads deserve custom thought. But forcing everything through manual effort usually leads to inconsistency, missed timing, and a social channel that never becomes a dependable source of leads.

On the other side, generic bots create their own mess. They post low-signal comments, expose accounts to restriction, and make brands look careless in public. That’s not scale. It’s borrowed activity with long-term costs.

The practical middle path

A better setup treats automation as support, not disguise.

Use software to monitor the right conversations. Let it help draft and schedule context-aware replies. Keep tight targeting. Review what matters. Stay inside sensible operating limits. Then do the human part well once a real conversation starts.

That’s how X becomes useful for B2B lead generation. Not because a machine replaces trust, but because it helps you show up more consistently where trust begins.

The first comment opens the door. The relationship still depends on what happens next.

If you’re serious about using a twitter auto comment bot, don’t judge the category by the shadiest tools in it. Judge it by whether the system helps you start relevant conversations without damaging the account doing the talking.

If you want to test a more controlled approach, try PowerIn. It’s built for teams that want to automate high-quality engagement on X and LinkedIn without falling into spammy patterns. You can use it to monitor targeted keywords and creators, generate contextual comments, and keep oversight through approvals and history tracking, so your social activity supports pipeline instead of creating risk.
